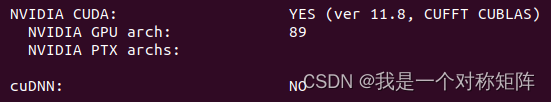


OpenCV audio reading code example

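From Windows 7 and OpenCV 4 onward, audio can be read through the CAP_MSMF backend, but OpenCV has no API for playing audio. A code example follows. This article walks through how OpenCV's CAP_MSMF backend reads audio from files and devices, as a way of learning how Media Foundation is used.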

#include <opencv2/core.hpp>
#include <opencv2/videoio.hpp>
#include <opencv2/highgui.hpp>
#include <iostream>

using namespace cv;
using namespace std;

int main(int argc, const char** argv)
{
    Mat videoFrame;
    Mat audioFrame;
    vector<vector<Mat>> audioData;
    VideoCapture cap;
    // Capture audio stream 0 only (-1 disables video) and request float samples.
    vector<int> params { CAP_PROP_AUDIO_STREAM, 0,
                         CAP_PROP_VIDEO_STREAM, -1,
                         CAP_PROP_AUDIO_DATA_DEPTH, CV_32F };

    // To read from a file instead: cap.open(file, CAP_MSMF, params);
    // Open the first audio input device.
    cap.open(0, CAP_MSMF, params);
    if (!cap.isOpened())
    {
        cerr << "ERROR! Can't open audio device 0" << endl;
        return -1;
    }

    const int audioBaseIndex = (int)cap.get(CAP_PROP_AUDIO_BASE_INDEX);
    const int numberOfChannels = (int)cap.get(CAP_PROP_AUDIO_TOTAL_CHANNELS);
    cout << "CAP_PROP_AUDIO_DATA_DEPTH: " << depthToString((int)cap.get(CAP_PROP_AUDIO_DATA_DEPTH)) << endl;
    cout << "CAP_PROP_AUDIO_SAMPLES_PER_SECOND: " << cap.get(CAP_PROP_AUDIO_SAMPLES_PER_SECOND) << endl;
    cout << "CAP_PROP_AUDIO_TOTAL_CHANNELS: " << cap.get(CAP_PROP_AUDIO_TOTAL_CHANNELS) << endl;
    cout << "CAP_PROP_AUDIO_TOTAL_STREAMS: " << cap.get(CAP_PROP_AUDIO_TOTAL_STREAMS) << endl;

    int numberOfSamples = 0;
    int numberOfFrames = 0; // stays 0 because the video stream is disabled
    audioData.resize(numberOfChannels);

    // The original listing opened the author's own playback helper here. It is not
    // part of OpenCV and is not shown in this article, so it is commented out.
    // mfcap::AudioOutput audioOutput;
    // audioOutput.Open((int)cap.get(CAP_PROP_AUDIO_TOTAL_CHANNELS),
    //                  (int)cap.get(CAP_PROP_AUDIO_SAMPLES_PER_SECOND),
    //                  16);

    for (;;)
    {
        if (cap.grab())
        {
            //cap.retrieve(videoFrame);
            std::vector<const unsigned char*> planes;
            planes.resize(numberOfChannels);
            // Each channel is retrieved separately at index audioBaseIndex + channel.
            for (int nCh = 0; nCh < numberOfChannels; nCh++)
            {
                cap.retrieve(audioFrame, audioBaseIndex+nCh);
                if (!audioFrame.empty())
                {
                    // clone() so stored chunks do not share the buffer that the
                    // next retrieve() call may overwrite.
                    audioData[nCh].push_back(audioFrame.clone());
                    //planes[nCh] = audioFrame.data + nCh * audioFrame.cols;
                }
                // Accumulated once per channel, so this is a total over all channels.
                numberOfSamples+=audioFrame.cols;
            }
        } else { break; }
    }

    cout << "Number of audio samples: " << numberOfSamples << endl
         << "Number of video frames: " << numberOfFrames << endl;
    return 0;
}
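Because OpenCV has no playback API, the samples collected above have to be handed to some other audio API, and such APIs commonly expect interleaved 16-bit PCM rather than one float row per channel. The following is a minimal sketch of that conversion. It uses plain std::vector buffers in place of cv::Mat so that it builds without OpenCV; the helper name toInterleavedPcm16 and the assumption that float samples are normalized to [-1, 1] are illustrative and do not come from the original code.

```cpp
#include <algorithm>
#include <cassert>
#include <cmath>
#include <cstddef>
#include <cstdint>
#include <vector>

// Hypothetical helper: converts planar float audio (one vector per channel,
// samples assumed to lie in [-1, 1]) into interleaved 16-bit PCM.
std::vector<std::int16_t> toInterleavedPcm16(const std::vector<std::vector<float>>& planar)
{
    if (planar.empty())
        return {};

    const std::size_t channels = planar.size();
    const std::size_t samples = planar[0].size();
    for (const auto& ch : planar)
        assert(ch.size() == samples); // every channel must have the same length

    std::vector<std::int16_t> out(channels * samples);
    for (std::size_t s = 0; s < samples; ++s)
    {
        for (std::size_t c = 0; c < channels; ++c)
        {
            // Clamp first so out-of-range input saturates instead of wrapping.
            const float v = std::max(-1.0f, std::min(1.0f, planar[c][s]));
            out[s * channels + c] = static_cast<std::int16_t>(std::lround(v * 32767.0f));
        }
    }
    return out;
}
```

In the capture loop, the samples of each retrieved chunk for channel nCh would be appended to planar[nCh] before calling the helper; the loop counts audioFrame.cols, which suggests each chunk is a single row of samples.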

Opening a device

bool CvCapture_MSMF::open(int index, const cv::VideoCaptureParameters* params)
{
    // Reset any previous state first.
    close();
    if (index < 0)
        return false;

    if (params)
    {
        // Enable hardware-accelerated encoding/decoding. Skipped here; it belongs
        // with the later study of hardware acceleration.
        configureHW(*params);
        // configureStreams decides whether the audio or the video stream is captured:
        //   audioStream = 0 to capture audio, otherwise audioStream = -1;
        //   videoStream works the same way for video.
        // setAudioProperties reads:
        //   outputAudioFormat: the audio sample depth, e.g. CV_16S;
        //   audioSamplesPerSecond: the sample rate;
        //   syncLastFrame: whether audio/video synchronization is required.
        //   OpenCV only supports audio/video synchronization for video files.
        if (!(configureStreams(*params) && setAudioProperties(*params)))
            return false;
    }

    // A device capture supports exactly one of audio or video: a single object
    // cannot open both, and it cannot open neither.
    if (videoStream != -1 && audioStream != -1 || videoStream == -1 && audioStream == -1)
    {
        CV_LOG_DEBUG(NULL, "Only one of the properties CAP_PROP_AUDIO_STREAM " << audioStream << " and " << CAP_PROP_VIDEO_STREAM << " must be different from -1");
        return false;
    }

    // DeviceList counts the video or audio input devices present on the system
    // and activates one of them.
    DeviceList devices;
    UINT32 count = 0;
    // When reading an audio device, enumerate the audio input devices.
    if (audioStream != -1)
        count = devices.read(MF_DEVSOURCE_ATTRIBUTE_SOURCE_TYPE_AUDCAP_GUID);
    // The same applies to video devices. OpenCV reads either an audio or a video
    // device per object; enabling both is rejected by the check above.
    if (videoStream != -1)
        count = devices.read(MF_DEVSOURCE_ATTRIBUTE_SOURCE_TYPE_VIDCAP_GUID);
    if (count == 0 || static_cast<UINT32>(index) > count)
    {
        CV_LOG_DEBUG(NULL, "Device " << index << " not found (total " << count << " devices)");
        return false;
    }

    // getDefaultSourceConfig mainly sets hardware-acceleration attributes; skip it for now.
    _ComPtr<IMFAttributes> attr = getDefaultSourceConfig();

    // SourceReaderCB is the read callback.
    // Once a read callback is registered, Media Foundation reads the source in
    // asynchronous mode. This open() overload reads a device; another overload
    // reads files. In OpenCV, devices are read asynchronously and files synchronously.
    _ComPtr<IMFSourceReaderCallback> cb = new SourceReaderCB();
    attr->SetUnknown(MF_SOURCE_READER_ASYNC_CALLBACK, cb.Get());

    // Activate the target device.
    _ComPtr<IMFMediaSource> src = devices.activateSource(index);
    if (!src.Get() || FAILED(MFCreateSourceReaderFromMediaSource(src.Get(), attr.Get(), &videoFileSource)))
    {
        CV_LOG_DEBUG(NULL, "Failed to create source reader");
        return false;
    }

    isOpen = true;
    device_status = true;
    camid = index;
    readCallback = cb;
    duration = 0;

    // Pick a suitable stream format. A camera usually exposes many formats
    // (width, height, fps); an audio device likewise offers different sample
    // rates, bit depths, and so on.
    if (configureOutput())
    {
        frameStep = captureVideoFormat.getFrameStep();
    }
    if (isOpen && !openFinalize_(params))
    {
        close();
        return false;
    }
    if (isOpen)
    {
        if (audioStream != -1)
            if (!checkAudioProperties())
                return false;
    }
    return isOpen;
}
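The stream check in open() is easy to misread because it combines four comparisons. As a small sketch, the same rule can be written as a logical XOR: for a device, exactly one of the two stream indices may differ from -1. The function name isValidDeviceSelection is hypothetical and exists only for this illustration.

```cpp
#include <cassert>

// Mirrors the condition in CvCapture_MSMF::open(int, ...): for a device,
// exactly one of the two stream indices may differ from -1.
// isValidDeviceSelection is a hypothetical name used only for this sketch.
bool isValidDeviceSelection(int videoStream, int audioStream)
{
    const bool wantsVideo = (videoStream != -1);
    const bool wantsAudio = (audioStream != -1);
    return wantsVideo != wantsAudio; // logical XOR
}
```

This is why the example at the top of the article passes CAP_PROP_VIDEO_STREAM = -1 together with CAP_PROP_AUDIO_STREAM = 0 when opening an audio device.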

Selecting a suitable device stream: configureOutput

bool CvCapture_MSMF::configureOutput()
{
    // Deselect every stream of the source first; the streams that are actually
    // wanted are selected again later in initStream().
    if (FAILED(videoFileSource->SetStreamSelection((DWORD)MF_SOURCE_READER_ALL_STREAMS, false)))
    {
        CV_LOG_WARNING(NULL, "Failed to reset streams");
        return false;
    }
    bool tmp = true;
    // Configure the video stream.
    if (videoStream != -1)
        tmp = (!device_status)? configureVideoOutput(MediaType(), outputVideoFormat) : configureVideoOutput(MediaType::createDefault_Video(), outputVideoFormat);
    // Configure the audio stream.
    if (audioStream != -1)
        tmp &= (!device_status)? configureAudioOutput(MediaType()) : configureAudioOutput(MediaType::createDefault_Audio());
    return tmp;
}

Configuring the audio stream: configureAudioOutput

bool CvCapture_MSMF::configureAudioOutput(MediaType newType)
{
    // FormatStorage holds the stream formats of the source and picks the best match.
    FormatStorage formats;
    formats.read(videoFileSource.Get());
    std::pair<FormatStorage::MediaID, MediaType> bestMatch;
    formats.countNumberOfAudioStreams(numberOfAudioStreams);
    // Devices search for the closest format; files look the stream up by index.
    if (device_status)
        bestMatch = formats.findBestAudioFormat(newType);
    else
        bestMatch = formats.findAudioFormatByStream(audioStream);
    if (bestMatch.second.isEmpty(true))
    {
        CV_LOG_DEBUG(NULL, "Can not find audio stream with requested parameters");
        isOpen = false;
        return false;
    }
    dwAudioStreamIndex = bestMatch.first.stream;
    dwStreamIndices.push_back(dwAudioStreamIndex);
    MediaType newFormat = bestMatch.second;

    newFormat.majorType = MFMediaType_Audio;
    // A sample rate of 0 means "not requested"; fall back to 44100 Hz.
    newFormat.nSamplesPerSec = (audioSamplesPerSecond == 0) ? 44100 : audioSamplesPerSecond;
    switch (outputAudioFormat)
    {
    case CV_8S:
        newFormat.subType = MFAudioFormat_PCM;
        newFormat.bit_per_sample = 8;
        break;
    case CV_16S:
        newFormat.subType = MFAudioFormat_PCM;
        newFormat.bit_per_sample = 16;
        break;
    case CV_32S:
        newFormat.subType = MFAudioFormat_PCM;
        newFormat.bit_per_sample = 32;
        break; // missing in the listing as posted; without it CV_32S falls through to float
    case CV_32F:
        newFormat.subType = MFAudioFormat_Float;
        newFormat.bit_per_sample = 32;
        break;
    default:
        break;
    }

    // Initialize the stream.
    return initStream(dwAudioStreamIndex, newFormat);
}
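The depth-to-format mapping in configureAudioOutput can be isolated as a small decision table. The sketch below shows the intended mapping with stand-in enums (Depth, SubType) and a stand-in struct (AudioFormat), all hypothetical and defined locally so that it builds without OpenCV or the Windows SDK. Every case ends in break: if the 32-bit integer case lacked one, execution would fall through into the float case and overwrite the subtype.

```cpp
#include <cassert>

// Stand-ins for the OpenCV depth constants and Media Foundation subtypes,
// defined locally so the sketch builds without either library.
enum class Depth { S8, S16, S32, F32, Other };
enum class SubType { Unchanged, Pcm, Float };

struct AudioFormat
{
    SubType subType = SubType::Unchanged;
    int bitsPerSample = 0;   // 0 means "keep the value of the matched format"
    int samplesPerSecond = 0;
};

// Integer depths map to PCM, the 32-bit float depth maps to IEEE float,
// and a requested sample rate of 0 falls back to 44100 Hz.
AudioFormat chooseAudioFormat(Depth depth, int requestedRate)
{
    AudioFormat fmt;
    fmt.samplesPerSecond = (requestedRate == 0) ? 44100 : requestedRate;
    switch (depth)
    {
    case Depth::S8:  fmt.subType = SubType::Pcm;   fmt.bitsPerSample = 8;  break;
    case Depth::S16: fmt.subType = SubType::Pcm;   fmt.bitsPerSample = 16; break;
    case Depth::S32: fmt.subType = SubType::Pcm;   fmt.bitsPerSample = 32; break;
    case Depth::F32: fmt.subType = SubType::Float; fmt.bitsPerSample = 32; break;
    default: break; // leave whatever the matched format already had
    }
    return fmt;
}
```

With this table, a request for 32-bit integer samples yields PCM at 32 bits, which is distinct from the float request even though both use the same sample width.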

Initializing a stream: initStream

bool CvCapture_MSMF::initStream(DWORD streamID, const MediaType mt)
{
    CV_LOG_DEBUG(NULL, "Init stream " << streamID << " with MediaType " << mt);
    _ComPtr<IMFMediaType> mediaTypesOut;
    if (mt.majorType == MFMediaType_Audio)
    {
        captureAudioFormat = mt;
        mediaTypesOut = mt.createMediaType_Audio();
    }
    if (mt.majorType == MFMediaType_Video)
    {
        captureVideoFormat = mt;
        mediaTypesOut = mt.createMediaType_Video();
    }
    if (FAILED(videoFileSource->SetStreamSelection(streamID, true)))
    {
        CV_LOG_WARNING(NULL, "Failed to select stream " << streamID);
        return false;
    }
    HRESULT hr = videoFileSource->SetCurrentMediaType(streamID, NULL, mediaTypesOut.Get());
    if (hr == MF_E_TOPO_CODEC_NOT_FOUND)
    {
        CV_LOG_WARNING(NULL, "Failed to set mediaType (stream " << streamID << ", " << mt << "(codec not found)");
        return false;
    }
    else if (hr == MF_E_INVALIDMEDIATYPE)
    {
        CV_LOG_WARNING(NULL, "Failed to set mediaType (stream " << streamID << ", " << mt << "(unsupported media type)");
        return false;
    }
    else if (FAILED(hr))
    {
        CV_LOG_WARNING(NULL, "Failed to set mediaType (stream " << streamID << ", " << mt << "(HRESULT " << hr << ")");
        return false;
    }
    return true;
}

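Capturing audio frames: grab()

The frame-capture callback: SourceReaderCB

OpenCV captures device data in asynchronous mode, which requires a custom callback that receives each captured frame.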

// The callback must derive from IMFSourceReaderCallback.
class SourceReaderCB : public IMFSourceReaderCallback
{
public:
    // Maximum number of buffered frames.
    static const size_t MSMF_READER_MAX_QUEUE_SIZE = 3;

    SourceReaderCB() :
        m_nRefCount(0), m_hEvent(CreateEvent(NULL, FALSE, FALSE, NULL)), m_bEOS(FALSE), m_hrStatus(S_OK), m_reader(NULL), m_dwStreamIndex(0)
    {
    }

    // Required by COM; essentially boilerplate that follows a fixed pattern.
    // IUnknown methods
    STDMETHODIMP QueryInterface(REFIID iid, void** ppv) CV_OVERRIDE
    {
#ifdef _MSC_VER
#pragma warning(push)
#pragma warning(disable:4838)
#endif
        static const QITAB qit[] =
        {
            QITABENT(SourceReaderCB, IMFSourceReaderCallback),
            { 0 },
        };
#ifdef _MSC_VER
#pragma warning(pop)
#endif
        return QISearch(this, qit, iid, ppv);
    }
    STDMETHODIMP_(ULONG) AddRef() CV_OVERRIDE
    {
        return InterlockedIncrement(&m_nRefCount);
    }
    STDMETHODIMP_(ULONG) Release() CV_OVERRIDE
    {
        ULONG uCount = InterlockedDecrement(&m_nRefCount);
        if (uCount == 0)
        {
            delete this;
        }
        return uCount;
    }

    // Called by the source reader each time an asynchronous ReadSample() request
    // completes. Wait() below consumes the samples queued here.
    STDMETHODIMP OnReadSample(HRESULT hrStatus, DWORD dwStreamIndex, DWORD dwStreamFlags, LONGLONG llTimestamp, IMFSample *pSample) CV_OVERRIDE
    {
        HRESULT hr = 0;
        cv::AutoLock lock(m_mutex);

        if (SUCCEEDED(hrStatus))
        {
            if (pSample)
            {
                CV_LOG_DEBUG(NULL, "videoio(MSMF): got frame at " << llTimestamp);
                // If the queue has reached its maximum size, older frames must be dropped.
                // The active branch below discards the whole queue, not just the front.
                if (m_capturedFrames.size() >= MSMF_READER_MAX_QUEUE_SIZE)
                {
#if 0
                    CV_LOG_DEBUG(NULL, "videoio(MSMF): drop frame (not processed). Timestamp=" << m_capturedFrames.front().timestamp);
                    m_capturedFrames.pop();
#else
                    // this branch reduces latency if we drop frames due to slow processing.
                    // avoid fetching of already outdated frames from the queue's front.
                    CV_LOG_DEBUG(NULL, "videoio(MSMF): drop previous frames (not processed): " << m_capturedFrames.size());
                    std::queue<CapturedFrameInfo>().swap(m_capturedFrames);  // similar to missing m_capturedFrames.clean();
#endif
                }
                m_capturedFrames.emplace(CapturedFrameInfo{ llTimestamp, _ComPtr<IMFSample>(pSample), hrStatus });
            }
        }
        else
        {
            CV_LOG_WARNING(NULL, "videoio(MSMF): OnReadSample() is called with error status: " << hrStatus);
        }

        if (MF_SOURCE_READERF_ENDOFSTREAM & dwStreamFlags)
        {
            // Reached the end of the stream.
            m_bEOS = true;
        }
        m_hrStatus = hrStatus;

        // Request the next sample so that the asynchronous read loop keeps running.
        if (FAILED(hr = m_reader->ReadSample(dwStreamIndex, 0, NULL, NULL, NULL, NULL)))
        {
            CV_LOG_WARNING(NULL, "videoio(MSMF): async ReadSample() call is failed with error status: " << hr);
            m_bEOS = true;
        }

        if (pSample || m_bEOS)
        {
            SetEvent(m_hEvent);
        }
        return S_OK;
    }

    STDMETHODIMP OnEvent(DWORD, IMFMediaEvent *) CV_OVERRIDE
    {
        return S_OK;
    }
    STDMETHODIMP OnFlush(DWORD) CV_OVERRIDE
    {
        return S_OK;
    }

    HRESULT Wait(DWORD dwMilliseconds, _ComPtr<IMFSample>& mediaSample, LONGLONG& sampleTimestamp, BOOL& pbEOS)
    {
        pbEOS = FALSE;

        for (;;)
        {
            {
                cv::AutoLock lock(m_mutex);

                pbEOS = m_bEOS && m_capturedFrames.empty();
                if (pbEOS)
                    return m_hrStatus;

                if (!m_capturedFrames.empty())
                {
                    CV_Assert(!m_capturedFrames.empty());
                    CapturedFrameInfo frameInfo = m_capturedFrames.front(); m_capturedFrames.pop();
                    CV_LOG_DEBUG(NULL, "videoio(MSMF): handle frame at " << frameInfo.timestamp);
                    mediaSample = frameInfo.sample;
                    CV_Assert(mediaSample);
                    sampleTimestamp = frameInfo.timestamp;
                    ResetEvent(m_hEvent);  // event is auto-reset, but we need this forced reset due time gap between wait() and mutex hold.
                    return frameInfo.hrStatus;
                }
            }

            CV_LOG_DEBUG(NULL, "videoio(MSMF): waiting for frame... ");
            DWORD dwResult = WaitForSingleObject(m_hEvent, dwMilliseconds);
            if (dwResult == WAIT_TIMEOUT)
            {
                return E_PENDING;
            }
            else if (dwResult != WAIT_OBJECT_0)
            {
                return HRESULT_FROM_WIN32(GetLastError());
            }
        }
    }

private:
    // Destructor is private. Caller should call Release.
    virtual ~SourceReaderCB()
    {
        CV_LOG_INFO(NULL, "terminating async callback");
    }

public:
    long                m_nRefCount;        // Reference count.
    cv::Mutex           m_mutex;
    HANDLE              m_hEvent;
    BOOL                m_bEOS;
    HRESULT             m_hrStatus;

    IMFSourceReader    *m_reader;
    DWORD               m_dwStreamIndex;

    struct CapturedFrameInfo {
        LONGLONG timestamp;
        _ComPtr<IMFSample> sample;
        HRESULT hrStatus;
    };

    std::queue<CapturedFrameInfo> m_capturedFrames;
};
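The queueing policy of SourceReaderCB can be studied apart from COM and Media Foundation. The sketch below is a portable approximation, not the OpenCV implementation: FrameQueue, push and waitPop are hypothetical names, and std::condition_variable stands in for the Win32 event. It keeps the two behaviors that matter: the producer discards the whole backlog when the queue is full so the consumer always sees recent data, and the consumer blocks with a timeout until a frame arrives.

```cpp
#include <cassert>
#include <chrono>
#include <condition_variable>
#include <cstddef>
#include <mutex>
#include <queue>
#include <utility>

// Portable sketch of the SourceReaderCB queueing policy. FrameQueue and its
// members are hypothetical names; std::condition_variable replaces the Win32 event.
template <typename Frame>
class FrameQueue
{
public:
    explicit FrameQueue(std::size_t maxSize) : maxSize_(maxSize) {}

    // Producer side (the role of OnReadSample): if the consumer has fallen
    // behind, discard everything queued so far and keep only the newest frame.
    void push(Frame frame)
    {
        {
            std::lock_guard<std::mutex> lock(mutex_);
            if (frames_.size() >= maxSize_)
                std::queue<Frame>().swap(frames_);
            frames_.push(std::move(frame));
        }
        ready_.notify_one();
    }

    // Consumer side (the role of Wait): block until a frame is available or
    // the timeout expires. Returns false on timeout.
    bool waitPop(Frame& out, std::chrono::milliseconds timeout)
    {
        std::unique_lock<std::mutex> lock(mutex_);
        if (!ready_.wait_for(lock, timeout, [this] { return !frames_.empty(); }))
            return false;
        out = std::move(frames_.front());
        frames_.pop();
        return true;
    }

    std::size_t size() const
    {
        std::lock_guard<std::mutex> lock(mutex_);
        return frames_.size();
    }

private:
    const std::size_t maxSize_;
    mutable std::mutex mutex_;
    std::condition_variable ready_;
    std::queue<Frame> frames_;
};
```

Dropping the entire backlog trades completeness for latency. That suits a live preview; for audio that is being recorded, every dropped chunk is an audible gap, so a consumer that needs all samples has to drain the queue faster than the device fills it.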

Original article: https://blog.csdn.net/qq_30340349/article/details/135463864
